DD12 • The Infrastructure Superintelligence Needs

Six YC startups are somehow, independently, building it. Earth, orbit, the Moon. Physics did the planning.

// ~10 min read • “It's almost like I planned it,” said Elon Musk of the convergence of SpaceX, Tesla, xAI and Neuralink. He didn't. The physics did. These six startups are proof.

In 2024, I published my picks from YC's S24 batch. On record, before anyone was paying attention. Four kept pulling me back: Starcloud, Spaceium, Zettascale and Entangl. Orbital data centers. Space refueling stations. Reconfigurable chips. Data center design automation. Four companies. None coordinating. Each solving what looked like a different problem.

I’ve watched them since their inception. Something kept drawing me back, though I couldn’t quite name why they belonged together. I just knew they did. So I kept looking. Then I spotted Voxel Energy and GRU Space. Both founded in 2025. Both fitting the same pattern I couldn’t yet articulate. Six pieces. One picture I hadn’t fully seen yet. It took one analogy to make it snap.

A while ago, a GPS track dropped on the internet: someone crossing the English Channel. By swimming. When it went viral, a guy on X said what most people were thinking:

“What an idiot. Why not just swim straight?”

I still think about that comment and wonder whether it was sarcasm, cruelty or ignorance. Either way, it carried a certain confidence. The kind of confidence that comes from looking at a map and deciding you understand the ocean.

Here’s what that person didn’t know. Swimming the English Channel has a 16% historical success rate. People train for years and still don’t make it. The S-curve is by design: it’s the only way to cross without drowning. The tides run perpendicular to the crossing. Fight them and you drown. Read them and you survive.

The swimmer wasn’t lost. He was navigating forces invisible to anyone watching from the shore.

I think about that comment every time someone looks at a deep tech founder and says: why not just swim straight?

This is how most people look at the founders I’m about to show you. They see the GPS trace. Strange loops. Scattered bets. Orbital data centers next to space refueling stations next to reconfigurable chips. They file it under moonshot and move on.

What they don’t see are the tides.

Those six startups have no shared roadmap. No group chat beyond YC’s Slack. No coordinating memo. Yet they are independently building the same machine.

Every major AI lab today says it is building toward superintelligence. Almost none of them have an infrastructure plan that gets us there. Think about what superintelligence actually requires, not as a concept but as an engineering problem.

It needs compute at a scale we haven’t built. That compute needs energy we can’t yet deliver. That energy needs infrastructure that doesn’t exist on Earth at the density required.

That's the problem these six startups are each solving. With or without knowing it.

Earlier this month, Elon Musk sat down with Dwarkesh Patel and John Collison to look back at the first principles that birthed SpaceX, Tesla, xAI and Neuralink. As they dissected the convergence of his empire, he laughed with that familiar, childlike wonder:

“It's almost like I planned it.”

Then he chuckled and added: “But I would never do such a thing.” He engineered each piece separately, intuitively, to solve the immediate bottleneck in front of him. But the physics planned the whole. Nobody knows what superintelligence looks like when it arrives. That’s not the point. What does it need to run on? That one has an answer.

That’s exactly what’s happening here, except nobody engineered any of it single-handedly. Like Musk, these YC founders are each solving the bottleneck in front of them. They’re just listening to the same set of universal constraints.

Look at what’s actually being built. Starcloud is moving compute infrastructure into orbit. Spaceium is building the refueling and repair layer that keeps it alive. GRU Space is constructing off-world habitats, the surface layer that makes a permanent presence on the Moon and beyond possible. Zettascale is redesigning chips from the ground up to run on a fraction of the power. Voxel Energy is keeping Earth-based clusters online while the orbital ones scale. Entangl is the connective tissue. It is the layer that stops the whole system from breaking as the pieces start talking to each other.

One orbital compute layer. One servicing layer. One surface layer. One chip layer. One power layer. One coherence layer.

Something is organizing this convergence, and it feels surreal. It’s not in any cap table.

That force has a name.

The tides.

There’s only one way to really understand something. Build it from scratch. Or talk to someone who already has. Feynman said it best:

“What I cannot create, I do not understand.”

So let’s build this from scratch.

Tide 1: We Are Running Out of Places to Turn Chips On

Parameters. Benchmarks. Reasoning capabilities. That’s what everyone’s watching. Almost nobody talks about the wall we are about to hit: we are running out of ways to turn the chips on.

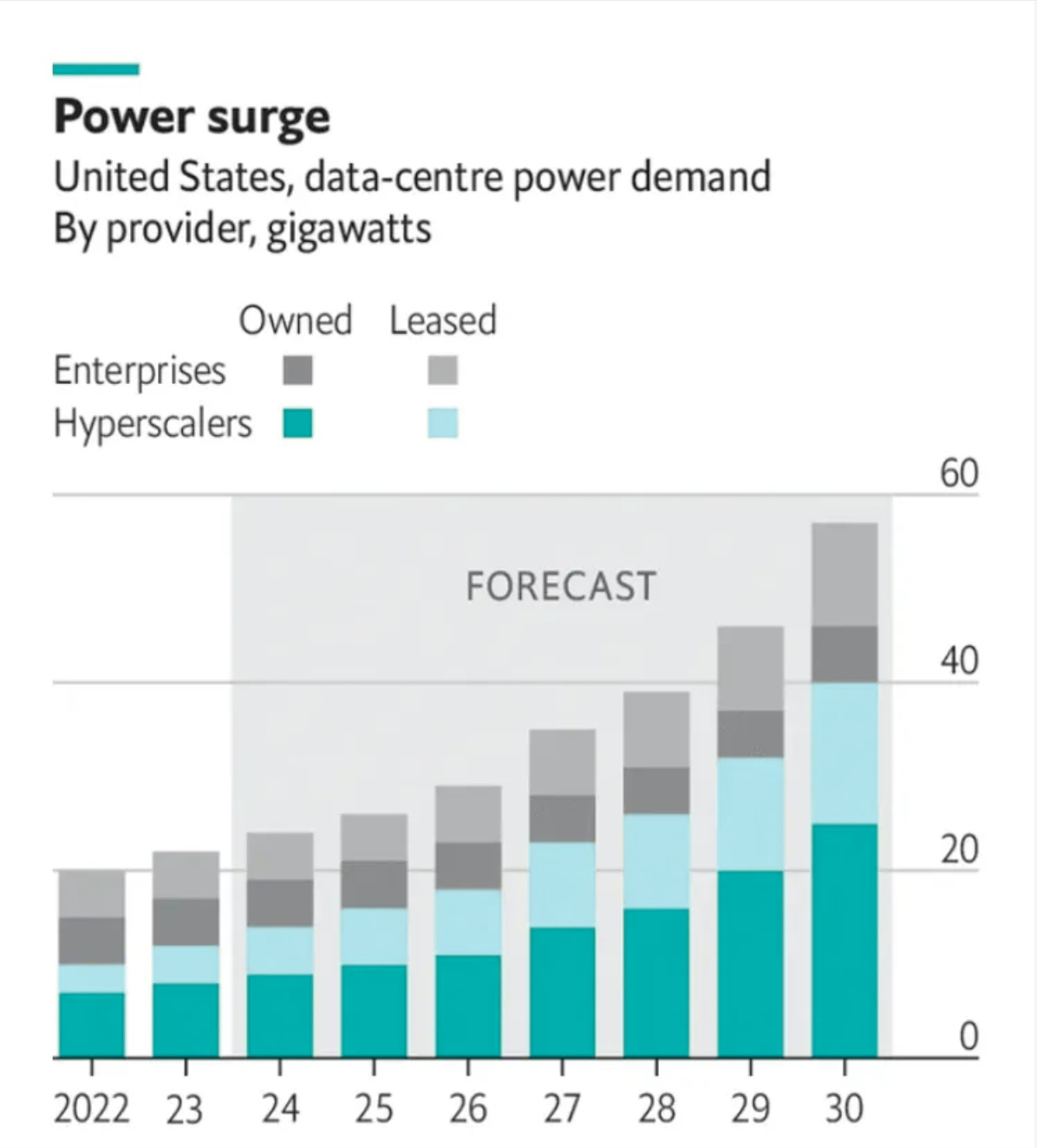

SemiAnalysis puts it plainly: In Texas alone, tens of gigawatts of data center load requests pour in every month. In all of 2025, barely more than a gigawatt got approved. The grid is sold out.

This is neither a planning problem nor a funding problem. You cannot lobby physics.

In 2024, Elon Musk’s xAI team built what nobody thought was possible: the 100,000-GPU Colossus supercluster in roughly four months. What really stunned the industry was that the Nvidia hardware and software stack went from installation to first training run in just 19 days on a 100,000-H100 configuration. Jensen Huang has said that process usually takes around four years of planning and deployment.

The secret wasn’t a new software trick or a clever procurement hack. Instead of waiting for full grid build‑out, xAI leaned on hundreds of megawatts of on‑site gas turbines installed beside the racks while a 150 MW substation and additional grid capacity were still being finished. While the industry obsessed over the speed, far fewer conversations focused on the fuel bill, emissions and other trade‑offs that came with that choice.

The turbines to replicate that at scale are now sold out through 2030. And the limiting factor isn't even the turbines; it's the vanes and blades inside them. Only three casting companies in the world make them, and all are backlogged. You cannot call your way out of this. This is the wall. And it is a 2026 problem, not a someday problem.

Three founders looked at this wall and chose a different route. Max Pfeiffer, Evan Schmidt and Casey Spencer at Voxel Energy decided to treat the data center and its power supply as a single machine rather than two separate problems. Their answer is off-grid sites with on-site solar, second-life EV batteries and a DC microgrid that sidesteps interconnect queues and multi-year permitting entirely. It is a near-term, on-Earth solution. A stopgap, yes, but one that does more than just buy time.
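To see why the power supply becomes part of the machine, here is a back-of-the-envelope energy balance for a flat load running only on solar and batteries. It is a sketch, not Voxel Energy's design: the `size_offgrid_site` helper, the 25% solar capacity factor, the 14 dark hours and the 90% battery round-trip efficiency are all assumptions chosen for illustration, and it ignores cloudy days, seasons and battery degradation.

```python
def size_offgrid_site(load_mw: float,
                      solar_capacity_factor: float,
                      dark_hours: float,
                      battery_round_trip: float = 0.9) -> tuple[float, float]:
    """Rough sizing for a constant load fed only by on-site solar plus batteries.

    Hypothetical helper for illustration. Returns (PV nameplate in MW,
    usable battery energy in MWh). Capacity factor = average PV output
    divided by nameplate over a full 24-hour day.
    """
    sunlit_hours = 24.0 - dark_hours
    # Energy the panels feed straight to the load while the sun is up.
    direct_mwh = load_mw * sunlit_hours
    # Energy the batteries must deliver through the dark hours.
    battery_mwh = load_mw * dark_hours
    # Charging costs extra energy because of round-trip losses.
    charging_mwh = battery_mwh / battery_round_trip
    pv_energy_mwh = direct_mwh + charging_mwh
    # Nameplate whose 24-hour average output covers the daily need.
    pv_nameplate_mw = pv_energy_mwh / (24.0 * solar_capacity_factor)
    return pv_nameplate_mw, battery_mwh


pv_mw, storage_mwh = size_offgrid_site(load_mw=1.0, solar_capacity_factor=0.25, dark_hours=14.0)
print(f"PV nameplate: {pv_mw:.2f} MW, storage: {storage_mwh:.0f} MWh per MW of load")
# PV nameplate: 4.26 MW, storage: 14 MWh per MW of load
```

Under these assumptions, every megawatt of steady load needs roughly four megawatts of panels and about 14 MWh of storage, which is why the site and its power plant have to be designed together.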

Then there's Starcloud. While some stayed grounded, others looked up. Philip Johnston, Ezra Feilden and Adi Oltean looked at the same wall and went in the opposite direction. They decided not to fight it. They’re just leaving Earth.

Moving the data center above the atmosphere where solar is constant, cooling is free and nobody is selling permits.

Think about what Earth actually is from the Sun’s perspective. The Sun is so large that 1.3 million Earths could fit inside it. We are, from its point of view, a rounding error. A tiny speck intercepting a fraction of a fraction of its total output. And even that fraction? We waste 30% of it just punching through the atmosphere. Then night comes. Then clouds. Then winter. Your solar panel is essentially working part-time on a bad contract.

In the right orbit (a dawn-dusk sun-synchronous one), that same panel works full time. No atmosphere. No night. No seasons. Just raw unfiltered sunlight hitting silicon 24 hours a day. The same hardware delivers 5-10x more usable energy. No batteries needed because the sun never sets. Oversimplified? Slightly. Wrong? No.

Remember, it’s always sunny in space.
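The 5-10x figure can be sanity-checked with two standard numbers: about 1,361 W/m² of sunlight above the atmosphere, and the 1,000 W/m² reference irradiance used to rate panels on the ground. The sketch below assumes a panel in orbit is sunlit about 99% of the time (roughly what a dawn-dusk sun-synchronous orbit allows) and that a ground panel averages a 15-25% capacity factor; both are illustrative assumptions, and the comparison ignores temperature, radiation damage and differences in how cells are rated.

```python
SOLAR_CONSTANT_W_M2 = 1361.0   # mean irradiance above the atmosphere at 1 AU
GROUND_RATING_W_M2 = 1000.0    # standard test-condition irradiance for rating panels


def orbit_to_ground_ratio(ground_capacity_factor: float,
                          orbit_sunlit_fraction: float = 0.99) -> float:
    """Average power per unit of panel area in orbit vs. on the ground (assumed inputs)."""
    orbit_avg = SOLAR_CONSTANT_W_M2 * orbit_sunlit_fraction
    ground_avg = GROUND_RATING_W_M2 * ground_capacity_factor
    return orbit_avg / ground_avg


for cf in (0.15, 0.20, 0.25):
    print(f"ground capacity factor {cf:.2f}: {orbit_to_ground_ratio(cf):.1f}x")
# ground capacity factor 0.15: 9.0x
# ground capacity factor 0.20: 6.7x
# ground capacity factor 0.25: 5.4x
```

Every ratio lands inside the 5-10x range: the advantage comes from sunlit hours and the missing atmosphere, not from better hardware.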

Same tide, different shoreline. One digs in. One leaves the planet. Space doesn't make everything cheaper today. But the direction of friction is clear. Building on Earth keeps getting harder. Building in orbit keeps getting cheaper. The lines are crossing.

Tide 2: The Hardest Part of Space Isn't Getting There

Everyone thinks of “space” and jumps to rockets. The harder problem isn’t getting there anymore. It’s what happens after.

Launch costs fell more than 97%, from about $54,000 per kilogram in the 1970s to under $1,500 today, and the last decade did most of the work. Getting to orbit is solved. Commercially, reusably, routinely.

What happens after launch is not.

Right now everything in orbit operates under one silent assumption: once it’s up there, nobody is coming. Satellites are engineered for redundancy because repair isn’t an option. Spacecraft carry enough fuel for their entire mission because refueling doesn’t exist. When something breaks or runs dry, you write it off. A $300 million asset becomes space debris because a $20 million service call was impossible.

Think about what that assumption does to design. Everything becomes a one-way bet. Bespoke. Overbuilt. Disposable.

When repair in space isn't an option, everything becomes a firework. Spectacular. One-time. Gone.

That assumption is about to disappear.

Ashi Dissanayake and Reza Fetanat at Spaceium are building fully automated space stations to refuel and repair on-orbit assets. The unsexy plumbing that makes everything else possible. Once refueling exists, three things shift simultaneously: assets stop being disposable and start being infrastructure, operators maneuver freely without burning their one-time fuel budget, and design logic flips from bespoke one-offs to modular, serviceable systems built to last.

The industry built everything around that assumption for sixty years. Spaceium is removing it.

Spaceium aims to solve the maintenance problem. But there's a deeper one. Permanent infrastructure in space, the kind that actually lasts, can't be shipped kilogram by kilogram from Earth forever. At some point you have to build with what's already there.

Every kilogram launched from the ground costs money, time and a launch window. At superintelligence scale, that math breaks.

Skyler Chan at GRU Space is building off-world habitats using in-situ resource utilization, turning local lunar material into structures. Their first project is a habitat on the Moon, opening 2032. Their actual bet is bigger: becoming the construction company that all future lunar and Martian infrastructure depends on. The logic is simple: the best building material on the Moon is the Moon.
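The launch math behind building with local material is linear, which is exactly the problem. The sketch below prices mass at the under-$1,500-per-kilogram figure quoted earlier and treats it as a flat rate to low Earth orbit; getting mass down to the lunar surface costs substantially more, so these are lower bounds. The `launch_bill_usd` helper, the 1,000-tonne structure and the local-material fractions are hypothetical.

```python
PRICE_PER_KG_TO_LEO = 1_500.0  # USD/kg, the launch-cost figure quoted in the text


def launch_bill_usd(structure_mass_kg: float, local_fraction: float = 0.0) -> float:
    """Cost to launch the part of a structure NOT built from in-situ material.

    Hypothetical helper: flat price per kg to low Earth orbit only; the extra
    cost of getting from orbit to the lunar surface is ignored.
    """
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    launched_kg = structure_mass_kg * (1.0 - local_fraction)
    return launched_kg * PRICE_PER_KG_TO_LEO


habitat_kg = 1_000_000.0  # hypothetical 1,000-tonne structure
for local in (0.0, 0.5, 0.9):
    bill = launch_bill_usd(habitat_kg, local)
    print(f"{local:.0%} local material: ${bill / 1e9:.2f}B just to reach orbit")
# 0% local material: $1.50B just to reach orbit
# 50% local material: $0.75B just to reach orbit
# 90% local material: $0.15B just to reach orbit
```

Cost scales one-for-one with launched mass, so the only lever that bends the curve is launching less of it. That is the in-situ bet.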

Spaceium keeps the assets alive. GRU builds the world they operate in.

Same tide. One fixes what breaks. One builds what’s next.

Tide 3: The Chips Have to Get Smarter Too

Even if you solve energy. Even if you solve orbit. You hit a third wall.

The chips themselves.

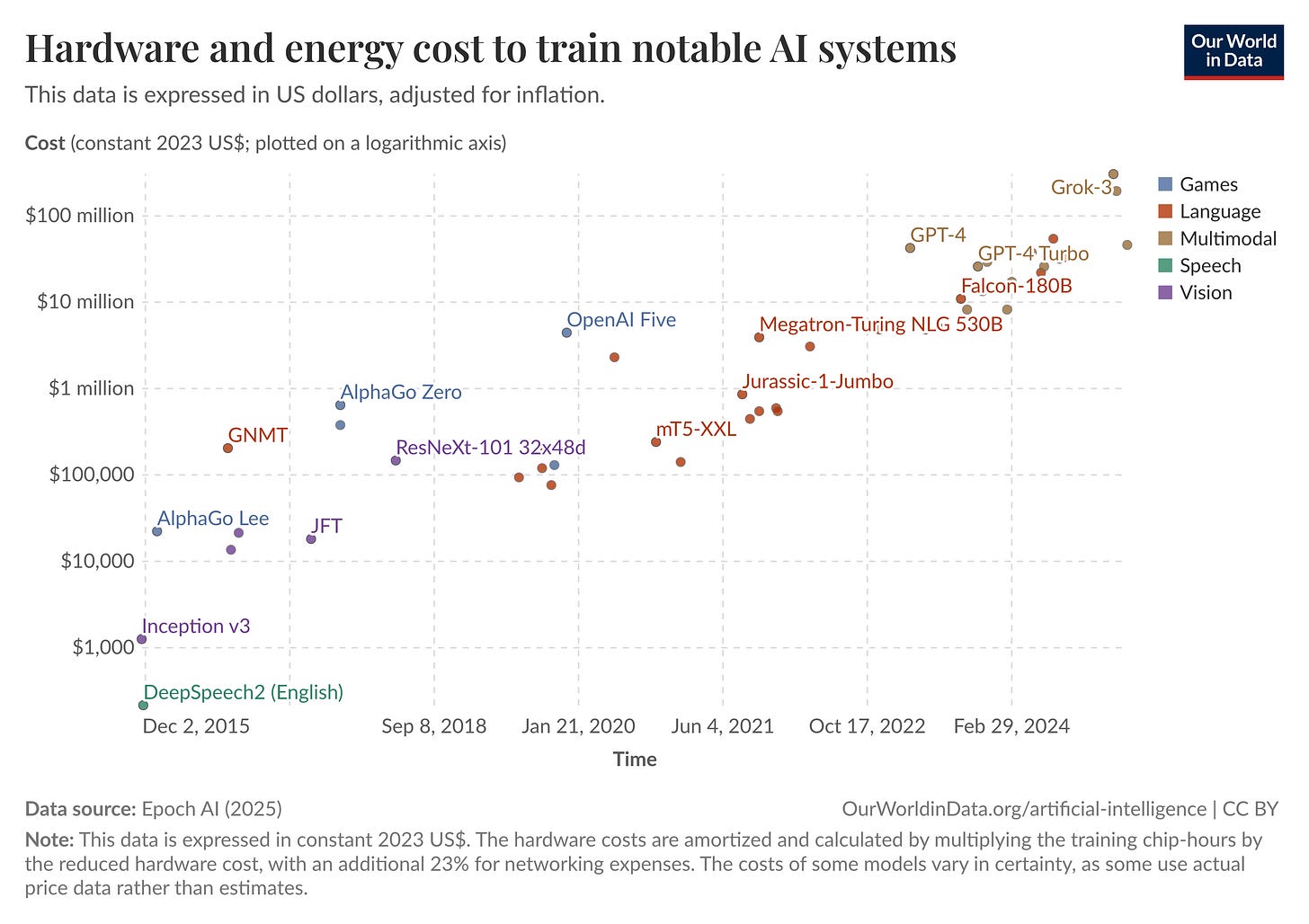

AI training runs are doubling in compute every few months. And the power draw of a single frontier training run is now comparable to that of a small city (~100,000 homes) for the duration of the run. You can move the data center to orbit. You can build your own grid. But if the chip architecture itself is wasteful, you’re just moving the problem, not solving it.
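The ~100,000 homes comparison is easy to reproduce. This sketch assumes a hypothetical 100,000-accelerator cluster drawing about 1.4 kW per accelerator once servers, networking and cooling are included, and an average household using roughly 10,500 kWh a year (close to the recent US average). All three inputs are assumptions, not figures from the text.

```python
HOURS_PER_YEAR = 8_760


def homes_equivalent(num_accelerators: int,
                     kw_per_accelerator_all_in: float,
                     home_kwh_per_year: float = 10_500.0) -> float:
    """How many average homes draw the same continuous power as the cluster (assumed inputs)."""
    cluster_kw = num_accelerators * kw_per_accelerator_all_in
    home_avg_kw = home_kwh_per_year / HOURS_PER_YEAR  # about 1.2 kW
    return cluster_kw / home_avg_kw


print(f"{homes_equivalent(100_000, 1.4):,.0f} homes")  # 116,800 homes
```

A 140 MW cluster running continuously matches roughly 117,000 average homes, the same order of magnitude as the figure above.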

Efficiency isn’t optional anymore. It’s the constraint everything else is running into.

Elias Almqvist and Prithvi Raj at Zettascale are building energy-efficient reconfigurable dataflow XPUs for AI training and inference. Their claim:

up to around 27.6x more efficient and performant than Nvidia’s H100 on targeted workloads.

I’ll be honest: when I first saw that number, I did a double-take. Numbers like that usually mean the benchmark is cherry-picked. But the architecture behind it is genuinely different. Not a faster GPU. A different way of thinking about how computation flows through silicon.
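Zettascale's actual architecture isn't described here, so what follows is only a generic toy of the dataflow idea. Instead of a program counter stepping through instructions that shuttle intermediates to and from shared memory, each operation fires as soon as all of its inputs arrive and hands its result directly to whatever consumes it. Cutting that data movement is one commonly cited reason dataflow designs can save energy. The `Node` and `run_dataflow` names and the tiny y = relu(w*x + b) graph are illustrative assumptions.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Node:
    name: str
    fn: Callable[..., float]
    inputs: List[str]


def run_dataflow(nodes: List[Node],
                 sources: Dict[str, float]) -> Tuple[Dict[str, float], List[str]]:
    """Toy dataflow executor: a node fires once every one of its inputs has arrived."""
    values: Dict[str, float] = dict(sources)
    consumers: Dict[str, List[Node]] = defaultdict(list)
    for node in nodes:
        for name in node.inputs:
            consumers[name].append(node)
    waiting = {node.name: len(node.inputs) for node in nodes}
    arrivals = deque(sources)  # names whose values have just become available
    fired: List[str] = []
    while arrivals:
        name = arrivals.popleft()
        for node in consumers[name]:
            waiting[node.name] -= 1
            if waiting[node.name] == 0:  # all operands present: fire
                values[node.name] = node.fn(*(values[i] for i in node.inputs))
                fired.append(node.name)
                arrivals.append(node.name)  # result flows straight to its consumers
    return values, fired


graph = [
    Node("mul", lambda w, x: w * x, ["w", "x"]),
    Node("add", lambda m, b: m + b, ["mul", "b"]),
    Node("relu", lambda a: max(0.0, a), ["add"]),
]
values, order = run_dataflow(graph, {"x": 2.0, "w": 3.0, "b": -1.0})
print(order, values["relu"])  # ['mul', 'add', 'relu'] 5.0
```

There is no instruction list at all: the order mul, add, relu falls out of when data arrives, and on real hardware independent branches could fire in parallel.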

But even the best chip in the world breaks inside a poorly run data center. As the stack gets more complex (chips co-designed with workloads, data centers co-designed with energy systems, orbital infrastructure talking to ground systems), the surface area for error compounds faster than any human team can manually review.

Shapol M and Antanas Zilinskas founded Entangl to detect and automatically resolve issues in data center engineering and operations. Not monitoring. Resolution. The distinction matters. Monitoring tells you something broke. Entangl stops it from breaking in the first place.
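The monitoring-versus-resolution distinction is easiest to see side by side. This is a hypothetical sketch, not Entangl's product: the `Reading` type, the 32 °C inlet threshold and the "move 5 kW of load to the coolest healthy rack" remediation are all illustrative assumptions. Monitoring stops at the alert; resolution detects the same condition and acts on it.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

MAX_INLET_C = 32.0  # hypothetical inlet-temperature limit


@dataclass
class Reading:
    rack: str
    inlet_temp_c: float
    load_kw: float


def monitor(readings: List[Reading]) -> List[str]:
    """Monitoring: report hot racks and stop there."""
    return [f"ALERT {r.rack}: inlet {r.inlet_temp_c:.1f} C"
            for r in readings if r.inlet_temp_c > MAX_INLET_C]


def resolve(readings: List[Reading],
            shed_kw: float = 5.0) -> Tuple[Dict[str, float], List[str]]:
    """Resolution: detect hot racks, then move load from each to the coolest healthy rack."""
    loads = {r.rack: r.load_kw for r in readings}
    hot = [r for r in readings if r.inlet_temp_c > MAX_INLET_C]
    healthy = sorted((r for r in readings if r.inlet_temp_c <= MAX_INLET_C),
                     key=lambda r: r.inlet_temp_c)
    if hot and not healthy:
        return loads, ["no healthy rack available: escalate to an operator"]
    actions = []
    for r in hot:
        target = healthy[0].rack
        moved = min(shed_kw, loads[r.rack])
        loads[r.rack] -= moved
        loads[target] += moved
        actions.append(f"moved {moved:.1f} kW from {r.rack} to {target}")
    return loads, actions


racks = [Reading("A", 35.0, 40.0), Reading("B", 28.0, 20.0), Reading("C", 30.0, 25.0)]
print(monitor(racks))  # ['ALERT A: inlet 35.0 C']
print(resolve(racks))  # ({'A': 35.0, 'B': 25.0, 'C': 25.0}, ['moved 5.0 kW from A to B'])
```

A real system would also check the target's headroom before moving load and re-read telemetry to confirm the fix held; both are left out to keep the loop readable.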

Think of it this way. Zettascale is building more energy-efficient AI accelerator chips. Voxel builds off-grid, self-powered data centers on Earth. Starcloud builds them in orbit. Spaceium makes the orbital layer serviceable, and GRU builds the surface layer beyond it. Entangl is the nervous system, keeping the whole thing coherent as the pieces start talking to each other.

Without Entangl, you don’t have a system. You have the parts of one.

Co-designing the Stack From Scratch

For decades, building infrastructure was linear. Design the chip. Build a server around it. Build a data center around the servers. Hope the grid shows up eventually. Until now, grid power was a given: a utility problem, not a core design axis.

Space, if it entered the conversation at all, lived firmly in the “sci‑fi later” bucket, not in anyone’s near‑term build plan.

That logic breaks the moment the tides start biting.

What these founders are building, independently, without coordination, is something different. Chips co-designed with workloads. Data centers co-designed with energy systems. Orbital infrastructure co-designed with servicing and habitation layers. The objective function isn’t “fast chip” or “cheap megawatt” anymore. It’s end-to-end intelligence per unit of real-world constraint.

Three constraints. Six responses. One stack that emerged without a map. On its own.

Over the past decade, training compute for frontier AI models has grown at roughly 4x per year. The infrastructure wasn’t built for that slope. These six startups are building the version that is.
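Two quick conversions put that slope in perspective, using only the 4x-per-year figure: the implied doubling time, and the compounded growth over a decade. The `doubling_time_months` helper is a name introduced here for illustration.

```python
import math


def doubling_time_months(annual_growth_factor: float) -> float:
    """Months needed to double, given a constant year-over-year growth factor."""
    return 12.0 / math.log2(annual_growth_factor)


print(f"{doubling_time_months(4.0):.1f} months per doubling")  # 6.0 months per doubling
print(f"{4.0 ** 10:,.0f}x growth over ten years")             # 1,048,576x growth over ten years
```

Roughly a millionfold in a decade is the slope the infrastructure now has to meet.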

Look at what we just built together.

Three Ways This Falls Apart

Every thesis has a breaking point. Here are three places this one might crack.

1. The efficiency escape hatch.

The strongest counterargument against this entire stack isn’t “space is hard” or “the grid will catch up.” It’s that we might algorithm our way out of needing any of it.

DeepSeek-style compression and efficiency shocks keep happening: models approach frontier-level capability at a fraction of previous compute budgets. If that curve holds for another five or ten doublings of algorithmic efficiency, the infrastructure tide I’m describing might flatten before any of these companies reach operational scale. The skeptics aren’t wrong to point at this. It deserves a real answer.

I sat with this one for a while. Here’s where I land.

Efficiency improvements have never once reduced demand for compute. Not once in sixty years. Every time tokens got cheaper, people used more of them. ChatGPT made inference cheap; inference demand exploded. DeepSeek made training cheaper; training runs got bigger.

Economists have a name for this: Jevons’ Paradox.

In the 19th century, more efficient steam engines didn't reduce coal consumption. They drove it up. Cheaper to run meant more reasons to run them. Compute is following the same logic: every time we make tokens cheaper, we find ten new reasons to use more of them.

The tide doesn't flatten. It redirects.
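The steam-engine logic has a compact textbook form. Assume demand for tokens has a constant price elasticity ε, and that an efficiency gain k cuts both the compute per token and the price per token by k. Token demand then rises by k^ε while compute per token falls by k, so total compute changes by k^(ε - 1): it grows whenever ε > 1. The sketch below is that simplified rebound model; the `compute_use_multiplier` name and the elasticities are illustrative, not measured values.

```python
def compute_use_multiplier(efficiency_gain: float, price_elasticity: float) -> float:
    """Change in total compute after an efficiency gain, under constant-elasticity demand.

    Simplified rebound model (assumption): price per token falls in proportion
    to compute per token, so demand scales by gain**elasticity while compute
    per token scales by 1/gain.
    """
    return efficiency_gain ** (price_elasticity - 1.0)


for elasticity in (0.5, 1.0, 1.5):
    total = compute_use_multiplier(10.0, elasticity)
    print(f"10x efficiency, elasticity {elasticity}: total compute x{total:.2f}")
# 10x efficiency, elasticity 0.5: total compute x0.32
# 10x efficiency, elasticity 1.0: total compute x1.00
# 10x efficiency, elasticity 1.5: total compute x3.16
```

Jevons’ Paradox is the ε > 1 case: the efficiency gain is real, and total consumption still rises.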

2. The regulatory brake.

Governments cap data centers. Slow launches. Tightly regulate training runs. At that point the limiting factor becomes politics, not physics.

This is actually the most likely near-term disruption. Governments don’t need to be competent to change the trajectory of AI. They just need to be slow. And slow is something governments are exceptionally good at.

Right now everyone is modeling the future with physics and capex curves. If we flip from hard constraints to soft ones (permits, politics, local veto power), the whole system gets re-bottlenecked at a layer that’s much harder to engineer around. You can’t first-principles your way past a Senate committee.

3. The ambition ceiling.

AI plateaus at “very useful” instead of civilization-scale. Not because of physics or politics but because we collectively decide we don’t actually want to build superintelligence. The tides become gentle waves. We stop swimming.

I’m not betting on any of these. But if one hits, it doesn’t kill the thesis. It changes which constraints bind first and where the curves bend.

The tides don’t disappear. They just find a different shoreline.

It Started Here

Where Thinking Happens

I've lived with this thesis long enough that it stopped surprising me. The part that still gets me isn't the technology. It's the timing. Six startups working on different problems, and somehow physics pulled them toward the same answer at the same moment. That's what keeps me up at night.

If the tides are pulling where I think they are, the core of our intelligence infrastructure won’t just sit on Earth. It will live in orbit. On the Moon. Inside habitats that don’t exist yet. The question of where thinking happens, something we’ve never had to ask before, just became an engineering problem.

This is the decade we stopped assuming intelligence belongs to one planet's surface.

N.

PS: The founder conversations are live on YouTube and Substack, and the rest are coming soon. Next up: Elias Almqvist (Zettascale). Come, think with us.
